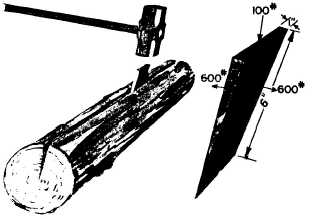

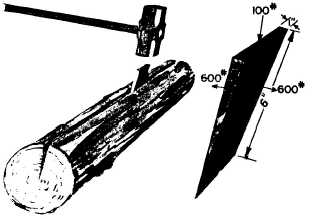

Figure 4-2.—A wedge.

driving the wedge full-length into the material to

cut or split, you force the material apart a distance

equal to the width of the broad end of the wedge.

See figure 4-2.

Long, slim wedges give high mechanical

advantage. For example, the wedge of figure 4-2 has a

mechanical advantage of six. The greatest value of

the wedge is that you can use it in situations in

which other simple machines won’t work. Imagine

the trouble you’d have trying to pull a log apart

with a system of pulleys.
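To see why a long, slim wedge pays off, you can sketch the arithmetic in a few lines of Python. This is only an illustration: it assumes the theoretical mechanical advantage of a wedge is its length divided by the thickness of its broad end, neglecting friction. The function name and the dimensions are hypothetical and are not taken from figure 4-2.

```python
def wedge_mechanical_advantage(length, thickness):
    """Theoretical mechanical advantage of a wedge, neglecting friction.

    length    -- distance from the point to the broad end
    thickness -- width of the broad end (same units as length)
    """
    if length <= 0 or thickness <= 0:
        raise ValueError("length and thickness must be positive")
    return length / thickness


# Hypothetical dimensions, chosen only to illustrate the ratio.
slim_wedge = wedge_mechanical_advantage(12, 2)    # 12 inches long, 2 inches thick
stubby_wedge = wedge_mechanical_advantage(6, 2)   # half the length, same thickness

print(slim_wedge)    # 6.0
print(stubby_wedge)  # 3.0
```

With the thickness held the same, doubling the length doubles the theoretical advantage.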

APPLICATIONS AFLOAT AND ASHORE

A common use of the inclined plane in the Navy

is the gangplank. Going aboard the ship by

gangplank, as illustrated in figure 4-3, is easier than

climbing a sea ladder. You appreciate the

mechanical advantage of the gangplank even more

when you have to carry your seabag or a case of

sodas aboard.

Remember that hatch dog in figure 1-10? The

use of the dog to secure a door takes advantage of

the lever principle. If you look sharply, you can

see that the dog seats itself on a steel wedge

welded to the door. As the dog slides upward along

this wedge, it forces the door tightly shut. This is

an inclined plane, with its length about eight

times its thickness. That means you get a

theoretical mechanical advantage of eight. In

chapter 1, you figured that you got a mechanical

advantage of four from the lever action of the dog.

The overall mechanical advantage is 8 x 4, or 32,

neglecting friction. Not bad for such a simple

gadget, is it? Push down with a 50-pound heave on

the handle and you squeeze the door

shut with a force of 1,600 pounds on that dog.

Figure 4-3.—The gangplank is an inclined plane.
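The hatch-dog arithmetic can be checked with a short Python sketch. It uses the figures from the text (a wedge advantage of 8, a lever advantage of 4, and a 50-pound heave) and neglects friction, as the text does. The function and variable names are made up for this illustration.

```python
import math


def combined_mechanical_advantage(*stages):
    """Multiply the advantages of simple machines acting in series."""
    return math.prod(stages)


wedge_ma = 8     # wedge length is about eight times its thickness
lever_ma = 4     # lever action of the dog, from chapter 1
effort_lb = 50   # heave on the handle, in pounds

overall_ma = combined_mechanical_advantage(wedge_ma, lever_ma)
force_on_dog_lb = effort_lb * overall_ma

print(overall_ma)        # 32
print(force_on_dog_lb)   # 1600
```

When one simple machine drives another, their advantages multiply rather than add, which is why the result is 32 and not 12.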

You’ll find the damage-control parties using

wedges by the dozen to shore up bulkheads and

decks. A few sledgehammer blows on a wedge will

quickly and firmly tighten up the shoring.

Chipping scale or paint off steel is a tough job.

However, you can make the job easier with a

compressed-air chisel. The wedge-shaped cutting

edge of the chisel gets in under the scale or the

paint and exerts a large amount of pressure to lift

the scale or paint layer. The chisel bit is another

application of the inclined plane.

SUMMARY

This chapter covered the following points about

the inclined plane and the wedge:

The inclined plane is a simple machine that lets

you raise or lower heavy objects by applying

a small force over a long distance.

You find the theoretical mechanical advantage

of the inclined plane by dividing the length

of the ramp by the perpendicular height of

the load that is raised or lowered. The

actual mechanical advantage is equal to the

weight of the resistance or load, divided by

the force that must be used to move the load

up the ramp.

The wedge is two inclined planes set base-to-

base. It finds its greatest use in cutting or

splitting materials.
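The two ramp formulas in this summary can be sketched in Python as follows. The ramp dimensions, load, and force are hypothetical values chosen only to show the arithmetic, and the function names are made up for this illustration.

```python
def theoretical_ma(ramp_length, height):
    """Length of the ramp divided by the perpendicular height the load is raised."""
    if ramp_length <= 0 or height <= 0:
        raise ValueError("ramp_length and height must be positive")
    return ramp_length / height


def actual_ma(load, applied_force):
    """Weight of the load divided by the force needed to move it up the ramp."""
    if load <= 0 or applied_force <= 0:
        raise ValueError("load and applied_force must be positive")
    return load / applied_force


# Hypothetical example: a 10-foot ramp raising a 300-pound load 2 feet,
# with a measured push of 75 pounds needed to move the load.
tma = theoretical_ma(10, 2)
ama = actual_ma(300, 75)

print(tma)  # 5.0
print(ama)  # 4.0
```

In this made-up example the actual advantage comes out lower than the theoretical one. The theoretical figure neglects friction, while the actual figure is based on the force you really have to apply.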
